Statistical tests are used in hypothesis testing to determine whether a relationship exists between independent and dependent variables, and to estimate the difference between two or more groups.

A null hypothesis states that there is no relationship between the variables or no difference between the groups being studied. A statistical test then determines whether the observed data fall outside the range of values predicted by the null hypothesis.

In this article, we discuss the main aspects of statistical tests, including how to choose between parametric and nonparametric tests.

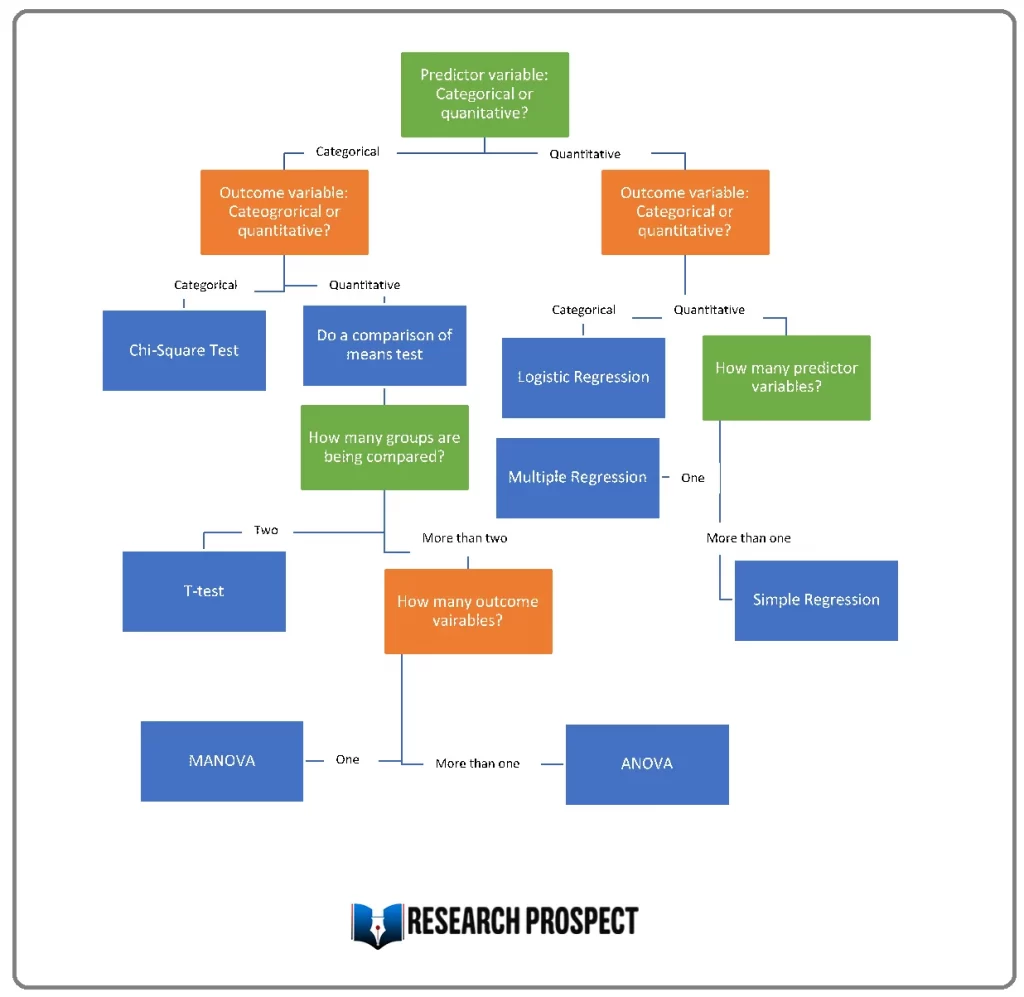

Do you already know the types of variables you are dealing with? If so, you can use the flowchart to select the correct statistical test for your data.

Application of Statistical Tests

According to Greenland et al. (2016), a statistical test calculates a test statistic, a value that describes how far the observed relationship or difference departs from what the null hypothesis predicts. It then calculates a probability value (p-value), which estimates how likely it is that a difference at least this large would be observed if the null hypothesis were true.

The relationship is statistically significant if the test statistic is more extreme than the value expected under the null hypothesis of no relationship, which corresponds to a small p-value. If the test statistic is less extreme, the relationship is not statistically significant.
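The idea of a p-value can be illustrated with a minimal sketch. It uses a permutation test, which is a technique swapped in here for illustration and not one named in the sources above, and the measurements are hypothetical. The function name `permutation_p_value` is also an assumption made for this example.

```python
import random
from statistics import mean

def permutation_p_value(group_a, group_b, n_permutations=10_000, seed=0):
    """Two-sided permutation p-value for a difference in group means."""
    rng = random.Random(seed)
    # Test statistic: absolute difference between the two group means.
    observed = abs(mean(group_a) - mean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    extreme = 0
    for _ in range(n_permutations):
        # Under the null hypothesis the group labels are interchangeable,
        # so shuffling them shows which differences arise by chance alone.
        rng.shuffle(pooled)
        diff = abs(mean(pooled[:n_a]) - mean(pooled[n_a:]))
        if diff >= observed:
            extreme += 1
    return observed, extreme / n_permutations

# Hypothetical measurements for two groups (made-up illustration data).
group_a = [5.1, 4.9, 5.6, 5.8, 6.0, 5.4]
group_b = [6.8, 7.1, 6.5, 7.4, 6.9, 7.0]

observed_diff, p_value = permutation_p_value(group_a, group_b)
print(f"observed difference in means: {observed_diff:.2f}")
print(f"permutation p-value: {p_value:.4f}")
```

A small p-value means that a difference this large rarely appears when the group labels are assigned at random, which is the sense in which the result is called statistically significant.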

When to Conduct the Statistical Testing

Statistical tests can be performed when the data have been collected in a statistically valid manner. With probability sampling methods, the data can be collected through either an experiment or observation.

A larger sample size increases the validity of the data because it represents the population under study more accurately. Before selecting a statistical test, it is important to check whether the data meet certain assumptions and to understand the types of variables used in the study.

The first assumption is independence of observations: the observations or variables included in the data are not related to one another.

The second assumption is homogeneity of variance: the variance within each group being compared is similar across all groups.

The third assumption is normality of the data, which applies mainly to quantitative data. When the data do not fulfill the assumptions of normality and homogeneity of variance, nonparametric tests should be used instead (Awang, Afthanorhan, and Asri, 2015).
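The normality and homogeneity assumptions can be checked in code. The following is a rough sketch, assuming SciPy is available and using the Shapiro-Wilk and Levene tests, which are common choices but are not prescribed by the sources cited here. The helper name `check_parametric_assumptions`, the 0.05 threshold, and the simulated data are all assumptions made for illustration.

```python
import numpy as np
from scipy import stats

def check_parametric_assumptions(groups, alpha=0.05):
    """Return (normality_ok, equal_variance_ok) for a list of samples."""
    # Shapiro-Wilk: a small p-value suggests a group is not normally distributed.
    normality_ok = all(stats.shapiro(g)[1] > alpha for g in groups)
    # Levene: a small p-value suggests the group variances differ.
    equal_variance_ok = bool(stats.levene(*groups)[1] > alpha)
    return normality_ok, equal_variance_ok

# Hypothetical data: three groups simulated from normal distributions.
rng = np.random.default_rng(seed=42)
groups = [rng.normal(loc=center, scale=2.0, size=30) for center in (10, 12, 15)]

normality_ok, equal_variance_ok = check_parametric_assumptions(groups)
if normality_ok and equal_variance_ok:
    print("Assumptions look reasonable: a parametric test may be suitable.")
else:
    print("Assumptions look doubtful: consider a nonparametric test.")
```

Independence of observations cannot be checked this way, because it depends on how the data were collected and not on the values themselves.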

According to Bevans (2020), identifying the types of variables leads to the selection of a suitable statistical test for the collected data. Quantitative and categorical variables are the two major types that determine which tests are suitable.

A quantitative variable represents an amount of something and can be further classified as continuous or discrete. When the measure can be divided into units smaller than one, it is a continuous variable, such as 0.5 grams of gold.

When the measure cannot be expressed in units smaller than one, the variable is discrete, such as 1 car. Categorical variables describe groupings of objects and are further divided into ordinal, nominal, and binary variables.

An ordinal variable represents data with an order or ranking, a nominal variable represents data by group names with no order, and a binary variable represents data with only two possible outcomes.

Selection of a Parametric Test

Bettany‐Saltikov and Whittaker (2014) stated that parametric tests make some common assumptions about the data collected from a sample of the population. Parametric tests have strict requirements and are applicable only when the assumptions described above are met.

Regression, comparison, and correlation tests are the common types of parametric tests used to determine the relationship between variables.

A regression test estimates the effect of one or more continuous independent variables on the dependent variable in the study and is commonly used to examine cause-and-effect relationships.

Simple linear regression is applicable when there is one independent variable and one dependent variable. Multiple linear regression is applicable when there are two or more independent variables and one dependent variable (Kazmi et al., 2017).
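As a small illustration of simple linear regression, the sketch below fits a line to one independent and one dependent variable with `scipy.stats.linregress`. The data are hypothetical and the use of SciPy is an assumption, since the article does not name a tool.

```python
from scipy import stats

# Hypothetical data: one independent variable (x) and one dependent variable (y).
x = [1, 2, 3, 4, 5]
y = [2.1, 3.9, 6.2, 7.8, 10.1]

# Fit y = intercept + slope * x by ordinary least squares.
result = stats.linregress(x, y)

print(f"slope = {result.slope:.2f}, intercept = {result.intercept:.2f}")
# The p-value tests the null hypothesis that the slope is zero.
print(f"p-value for the slope = {result.pvalue:.4f}")
```

`linregress` handles only one independent variable. Multiple linear regression needs a different tool, for example an ordinary least squares model from a package such as statsmodels.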

Comparison tests look for differences among the means of different groups. They are used to test the effect of a categorical variable on the mean value of another characteristic.

T-tests are applied to compare the mean values of exactly two groups within a study, such as the average income of men and women (Kim, 2015). When the study compares the means of more than two groups, the t-test is not applicable, and ANOVA or MANOVA tests are used instead.
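The difference between the two-group and multi-group cases can be sketched as follows, assuming SciPy and using made-up group values. `ttest_ind` performs an independent two-sample t-test and `f_oneway` performs a one-way ANOVA. MANOVA is not covered here.

```python
from scipy import stats

# Hypothetical measurements for three independent groups.
group_a = [23, 25, 21, 22, 24]
group_b = [30, 31, 29, 32, 28]
group_c = [26, 27, 25, 28, 26]

# Two groups: independent two-sample t-test on the means.
t_stat, t_p = stats.ttest_ind(group_a, group_b)
print(f"t-test: t = {t_stat:.2f}, p = {t_p:.4f}")

# Three or more groups: one-way ANOVA on the means.
f_stat, f_p = stats.f_oneway(group_a, group_b, group_c)
print(f"ANOVA: F = {f_stat:.2f}, p = {f_p:.4f}")
```

A significant ANOVA result only indicates that at least one group mean differs. It does not say which one.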

Bell, Bryman, and Harley (2018) stated that correlation is a statistical test that determines whether a relationship exists between two variables and, if so, how strong it is. Within correlation tests, the Pearson correlation is applied when both variables are continuous.

When both variables are categorical, the Chi-Square test is applied to determine whether a relationship exists between them.
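Both cases can be sketched with SciPy, which is an assumed tool here, using hypothetical data. `pearsonr` takes two continuous variables, while `chi2_contingency` takes a table of counts for two categorical variables.

```python
from scipy import stats

# Two continuous variables (hypothetical values).
hours_studied = [1, 2, 3, 4, 5, 6]
exam_score = [52, 55, 61, 64, 70, 73]

r, r_p = stats.pearsonr(hours_studied, exam_score)
print(f"Pearson r = {r:.3f}, p = {r_p:.4f}")

# Two categorical variables as a 2x2 table of counts (hypothetical):
# rows are the categories of one variable, columns those of the other.
observed_counts = [[30, 10],
                   [15, 25]]

chi2, chi2_p, dof, expected = stats.chi2_contingency(observed_counts)
print(f"Chi-Square = {chi2:.2f}, dof = {dof}, p = {chi2_p:.4f}")
```

For a 2x2 table, `chi2_contingency` applies a continuity correction by default, so the statistic can differ slightly from a hand calculation without the correction.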

Selection of a Nonparametric Test

Hair (2015) mentioned that when the data collected for the study do not meet all the assumptions, a nonparametric test can be used to study the variables. Because nonparametric tests are less strict, the inferences drawn from them are not as strong as those from parametric tests.

The Spearman test is used when both variables in the study are ordinal, and it takes the place of a correlation or regression test. When the independent variable is categorical and the dependent variable is quantitative, the sign test is suitable.
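The two tests above can be sketched as follows. The Spearman part assumes SciPy's `spearmanr`. The sign test is written by hand as an exact two-sided binomial calculation, and the helper name `sign_test_p_value` is hypothetical. It is shown in its one-sample form, comparing values against a hypothesized median, and all data are made up.

```python
from math import comb
from scipy import stats

# Spearman rank correlation for two ordinal variables (hypothetical 1-5 ratings).
satisfaction = [1, 2, 2, 3, 4, 5, 5]
loyalty = [1, 1, 2, 3, 3, 4, 5]

rho, rho_p = stats.spearmanr(satisfaction, loyalty)
print(f"Spearman rho = {rho:.3f}, p = {rho_p:.4f}")

def sign_test_p_value(values, hypothesized_median):
    """Exact two-sided sign test; values equal to the median are dropped."""
    positives = sum(v > hypothesized_median for v in values)
    negatives = sum(v < hypothesized_median for v in values)
    n = positives + negatives
    k = min(positives, negatives)
    # Probability of k or fewer of the rarer sign when both signs are equally likely.
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)

# Hypothetical sample tested against a hypothesized median of 10.
sample = [12, 15, 9, 14, 16, 13, 17, 15]
sign_p = sign_test_p_value(sample, hypothesized_median=10)
print(f"sign test p = {sign_p:.4f}")
```

The sign test uses only the direction of each difference, not its size, which is one reason its inferences are weaker than those of a t-test.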

When a categorical independent variable has three or more groups and the dependent variable is quantitative, the Kruskal-Wallis test is applied to develop valid findings.
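A minimal sketch of the Kruskal-Wallis test, assuming SciPy's `kruskal` and three hypothetical groups defined by one categorical variable:

```python
from scipy import stats

# Hypothetical quantitative measurements for three groups.
group_a = [23, 25, 21, 22, 24]
group_b = [30, 31, 29, 32, 28]
group_c = [26, 27, 25, 28, 26]

# Kruskal-Wallis compares the groups using ranks instead of raw values,
# so it does not rely on the normality assumption.
h_stat, h_p = stats.kruskal(group_a, group_b, group_c)
print(f"Kruskal-Wallis H = {h_stat:.2f}, p = {h_p:.4f}")
```

Because the test works on ranks, it is commonly described as the nonparametric counterpart of one-way ANOVA.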

The ANOSIM test is used when a categorical independent variable has three or more groups and there are two or more quantitative dependent variables.

The Wilcoxon Rank-Sum test is suitable when a categorical independent variable has two groups and the quantitative dependent variable is measured in groups that come from different populations.

The Wilcoxon Signed-Rank test is another nonparametric test, used when a categorical independent variable has two groups and the quantitative dependent variable is measured in groups that come from the same population (Bevans, 2020).
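The two Wilcoxon tests differ in whether the groups are independent or paired. The sketch below assumes SciPy, where `ranksums` handles two independent groups and `wilcoxon` handles paired measurements on the same subjects. All values are hypothetical.

```python
from scipy import stats

# Rank-sum: two independent groups drawn from different populations.
group_a = [23, 25, 21, 22, 24]
group_b = [30, 31, 29, 32, 28]

rank_sum_stat, rank_sum_p = stats.ranksums(group_a, group_b)
print(f"Wilcoxon rank-sum: statistic = {rank_sum_stat:.2f}, p = {rank_sum_p:.4f}")

# Signed-rank: paired measurements on the same subjects (before and after).
before = [72, 68, 75, 80, 66, 70, 74, 69]
after = [70, 65, 71, 75, 60, 63, 66, 60]

signed_stat, signed_p = stats.wilcoxon(before, after)
print(f"Wilcoxon signed-rank: statistic = {signed_stat:.2f}, p = {signed_p:.4f}")
```

`wilcoxon` requires both samples to have the same length because each value in `before` is matched with the value at the same position in `after`.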

Conclusion

Statistical tests are useful for determining the relationship between variables because they provide statistical justification for the results. They can be performed when the collected data are statistically valid, which requires meeting certain assumptions and understanding the types of variables used in the study.

Independence of observations, homogeneity of variance, and normality of the data are the three major assumptions that must be fulfilled to apply parametric tests. If any assumption is not met, nonparametric tests should be used.

Parametric tests provide strong inferences, and the common ones are correlation, regression, and comparison tests. Nonparametric tests are less strict, and the common ones include the Spearman test, the sign test, ANOSIM, and the Wilcoxon Rank-Sum test.

References

- Awang, Z., Afthanorhan, A., and Asri, M.A.M., 2015. Parametric and nonparametric approach in structural equation modeling (SEM): The application of bootstrapping. Modern Applied Science, 9(9), p.58.

- Bell, E., Bryman, A., and Harley, B., 2018. Business research methods. Oxford University Press.

- Bettany‐Saltikov, J., and Whittaker, V.J., 2014. Selecting the most appropriate inferential statistical test for your quantitative research study. Journal of Clinical Nursing, 23(11-12), pp.1520-1531.

- Greenland, S., Senn, S.J., Rothman, K.J., Carlin, J.B., Poole, C., Goodman, S.N., and Altman, D.G., 2016. Statistical tests, P values, confidence intervals, and power: a guide to misinterpretations. European Journal of Epidemiology, 31(4), pp.337-350.

- Hair, J.F., 2015. Essentials of business research methods. ME Sharpe.

- Kazmi, R., Jawawi, D.N., Mohamad, R., and Ghani, I., 2017. Effective regression test case selection: A systematic literature review. ACM Computing Surveys (CSUR), 50(2), pp.1-32.

- Kim, T.K., 2015. T-test as a parametric statistic. Korean Journal of Anesthesiology, 68(6), p.540.

- Sreejesh, S., Mohapatra, S., and Anusree, M.R., 2014. Business research methods: An applied orientation. Springer.

Frequently Asked Questions

Five common statistical tests are:

- T-test: Compares means of two groups.

- ANOVA: Analyzes variance among groups.

- Regression: Examines relationships between variables.

- Chi-square: Tests associations in categorical data.

- Pearson correlation: Measures linear relationships between continuous variables.