When testing your hypothesis, it is crucial to establish a null hypothesis. The null hypothesis proposes that there is no statistical or cause-and-effect relationship between the variables in the population. It is commonly denoted by the symbol H0, and researchers look for evidence to reject it.

Similarly, they also come up with an alternate hypothesis, which they believe explains their research.

Let’s look at an example to explain null and alternate hypotheses:

Question: Is the COVID-19 vaccine safe for people with heart conditions?

Null Hypothesis: COVID-19 vaccine is not safe for people with heart conditions.

Alternate Hypothesis: COVID-19 vaccine is safe for people with heart conditions.

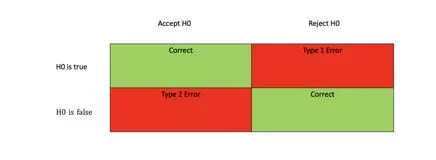

Statistical hypothesis tests rely on probabilities, so their conclusions are never certain. Even a well-designed test can go wrong in two ways when you test your hypothesis. These errors are known as the Type 1 error and the Type 2 error.

Understanding Type 1 Error

Type 1 errors are commonly known as false positives. A type 1 error occurs when a null hypothesis is rejected during hypothesis testing, even though it is true. In this type of error, we conclude that our results are statistically significant when they’re not.

The probability of making this type of error is the significance level, alpha or ‘α’, which you choose before running the test. You reject the null hypothesis when the p-value of your data falls below α. For example, an α of 0.02 means there is a 2% chance of rejecting the null hypothesis when it is actually true. You can reduce the probability of committing a type 1 error by lowering α. For example, an α of 0.01 means there is a 1% chance of committing this error.
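The link between α and the type 1 error rate can be checked with a small simulation. The sketch below is only an illustration under stated assumptions: the data are standard normal with a known standard deviation, the test is a two-sided z-test, and the helper names `z_test_p_value` and `type1_error_rate` are made up for this example. Because the null hypothesis is true in every simulated experiment, every rejection is a type 1 error.

```python
import random
from statistics import NormalDist


def z_test_p_value(sample, mu0, sigma):
    """Two-sided p-value for H0: population mean == mu0 (sigma assumed known)."""
    sample_mean = sum(sample) / len(sample)
    z = (sample_mean - mu0) / (sigma / len(sample) ** 0.5)
    return 2 * (1 - NormalDist().cdf(abs(z)))


def type1_error_rate(alpha, n_experiments=10_000, n=30, seed=0):
    """Fraction of simulated experiments that reject H0 although H0 is true."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(n_experiments):
        # The data are drawn with mean 0, so H0 (mu0 = 0) is true by construction.
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
        if z_test_p_value(sample, mu0=0.0, sigma=1.0) < alpha:
            rejections += 1  # a false positive, i.e. a type 1 error
    return rejections / n_experiments


for alpha in (0.05, 0.01):
    rate = type1_error_rate(alpha)
    print(f"alpha={alpha}: observed type 1 error rate = {rate:.3f}")
```

The observed rates should land close to the chosen α values, which is why lowering α lowers the chance of a false positive.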

Example for Type 1 Error

Let’s say that you’re convinced the Earth is flat and want to prove it to others. Your null hypothesis, in this case, will be: The earth is not flat.

To reject this hypothesis, you walk on a plain surface for a few days, noticing that hey – it does look and feel flat when walking, so it must be flat and not a sphere.

You, therefore, reject your null hypothesis and tell everyone that the earth is, in fact, flat. This example is a simple explanation of the Type 1 Error. Although type 1 errors are a little more complex in real life than the example used, this is what the error looks like.

In such cases, your goal is to minimize the chances of the type 1 error. For instance, here, you could have minimized your probability of type 1 error by reading some scientific journals about the earth’s shape.

Understanding Type 2 Errors

Type 2 errors are also called false negatives. A type 2 error occurs when a hypothesis test fails to reject a null hypothesis that is actually false. Beta or “β” is the probability of making a type 2 error. Beta is related to the power of the statistical test, i.e., power = 1 - β.

A high test power can reduce the probability of committing a type 2 error.
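To make β and power concrete, here is a minimal simulation sketch. It assumes normally distributed data with a known standard deviation of 1, a two-sided z-test at α = 0.05, and a true mean of 0.5 while the null hypothesis claims 0. These numbers and the helper names `rejects_null` and `estimate_beta` are hypothetical, chosen only for illustration. Since the null hypothesis is false in every simulated experiment, each failure to reject it is a type 2 error.

```python
import random
from statistics import NormalDist


def rejects_null(sample, mu0, sigma, alpha):
    """Two-sided z-test: True if H0 (mean == mu0) is rejected at level alpha."""
    sample_mean = sum(sample) / len(sample)
    z = (sample_mean - mu0) / (sigma / len(sample) ** 0.5)
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_value < alpha


def estimate_beta(true_mean, n, alpha=0.05, n_experiments=10_000, seed=1):
    """Fraction of experiments that fail to reject H0 although H0 is false."""
    rng = random.Random(seed)
    misses = 0
    for _ in range(n_experiments):
        # H0 claims the mean is 0, but the data really have mean `true_mean`.
        sample = [rng.gauss(true_mean, 1.0) for _ in range(n)]
        if not rejects_null(sample, mu0=0.0, sigma=1.0, alpha=alpha):
            misses += 1  # a false negative, i.e. a type 2 error
    return misses / n_experiments


beta = estimate_beta(true_mean=0.5, n=30)
print(f"beta  (type 2 error rate) ~ {beta:.3f}")
print(f"power (1 - beta)          ~ {1 - beta:.3f}")
```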

Reducing Type 2 Errors

A Type 2 error cannot be ruled out entirely, but you can reduce its probability. Since a type 2 error is closely related to the power of the test, increasing the test power makes these errors less likely. So, how do we do that?

- Increasing Sample Size: The simplest way to increase the power of the test is by increasing the sample size of the analysis. The greater the sample size is, the greater the chance that the test detects a real difference. Running the test for a longer duration and gathering more extensive data can help you make an accurate decision with your results.

- Increasing the Significance Level: A higher significance level makes the test reject the null hypothesis more readily, which raises its power. The trade-off is a higher chance of rejecting the null hypothesis when it is true, i.e., a type 1 error.
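The effect of both levers in the list above can be computed directly for a simple case. The sketch below assumes a two-sided one-sample z-test with known standard deviation. The function name `power_two_sided_z` and the effect size of 0.5 are illustrative assumptions, not values from a real study.

```python
from statistics import NormalDist


def power_two_sided_z(effect, sigma, n, alpha):
    """Power of a two-sided one-sample z-test.

    `effect` is the true distance between the population mean and the
    value claimed by H0; `sigma` is the (assumed known) standard deviation.
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)  # rejection cutoff for |z|
    shift = effect / (sigma / n ** 0.5)         # mean of z when H0 is false
    # Probability that z lands beyond either cutoff.
    return std_normal.cdf(shift - z_crit) + std_normal.cdf(-shift - z_crit)


for n in (10, 30, 100):
    for alpha in (0.01, 0.05):
        power = power_two_sided_z(effect=0.5, sigma=1.0, n=n, alpha=alpha)
        print(f"n={n:>3}  alpha={alpha:<4}  power={power:.3f}  beta={1 - power:.3f}")
```

In the printed table, power rises as the sample size grows and as α grows, while β falls. The second lever is paid for with a higher type 1 error rate, so a larger sample is usually the safer choice.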

Examples of a Type 2 Error

Suppose a pharmaceutical company is testing how effective two new vaccines for COVID-19 are in developing antibodies. The null hypothesis states that both the vaccines are equally effective, whereas the alternate hypothesis states that there is varying effectiveness between the two vaccines.

To test this hypothesis, the pharmaceutical company begins a trial to compare the two vaccines. It divides the participants, giving Group A one of the vaccines and Group B the other vaccine.

The beta is calculated to be 0.03 (or 3%). This means that the chance of committing a type 2 error is 3%, and the power of the test is 97%. The null hypothesis should be rejected if the vaccines are not equally effective. However, if the null hypothesis is not rejected in that case, a type 2 error occurs, i.e., a false negative.

Which is Worse – Type 1 or Type 2 Error?

The simple answer is – it depends entirely on the statistical situation.

Suppose you design a medical screening test for diabetes. A Type 1 error in this test may make the patient believe they have diabetes when they don’t. However, this may lead to further examinations and ultimately reveal that the patient does not have the illness.

In contrast, a Type 2 error may give the patient the wrong assurance that they do not have diabetes, when in fact, they do. As a result, they may not go for further examinations or get the illness treated. This may lead to several problems for the patient.

In this case, if you were to choose which type of error is ‘less dangerous,’ you’d opt for Type 1 error.
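The screening trade-off can be sketched in a few lines of code. Everything below is hypothetical: the readings, the true diagnoses, and the cutoffs are made-up numbers for illustration and not clinical guidance. The null hypothesis for each patient is "does not have diabetes", so a healthy patient who is flagged is a type 1 error and a sick patient who is missed is a type 2 error.

```python
def count_errors(readings, has_diabetes, threshold):
    """Return (false_positives, false_negatives) for a 'reading >= threshold' screen."""
    false_pos = 0
    false_neg = 0
    for reading, sick in zip(readings, has_diabetes):
        flagged = reading >= threshold
        if flagged and not sick:
            false_pos += 1  # type 1 error: healthy patient flagged
        elif not flagged and sick:
            false_neg += 1  # type 2 error: sick patient missed
    return false_pos, false_neg


# Hypothetical test readings and true status, invented for this example.
readings = [88, 95, 102, 110, 118, 121, 127, 133, 140, 152]
has_diabetes = [False, False, False, False, True, False, True, True, True, True]

for threshold in (115, 126, 135):
    fp, fn = count_errors(readings, has_diabetes, threshold)
    print(f"threshold={threshold}: type 1 errors={fp}, type 2 errors={fn}")
```

With this toy data, the lowest cutoff produces one false positive and no false negatives, while the highest cutoff produces no false positives and three false negatives. A screening test that treats type 2 errors as more dangerous would therefore lean toward the lower cutoff.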

Now let’s take a courtroom scenario in which a person is suspected of committing murder. Here, the null hypothesis is that the suspect is innocent. A type 2 error in this situation would find the suspect innocent when they’re guilty, whereas a type 1 error would find the suspect guilty when they’re innocent. Not very fair, is it? In this scenario, one can argue that a type 2 error may cause less harm than a type 1 error.

FAQs About Type 1 and Type 2 Errors

Type 1 errors are false positives and occur when a null hypothesis is rejected even though it is true. Type 2 errors, in contrast, are false negatives and happen when a null hypothesis is not rejected even though it is false.

Choosing a lower significance level can minimize Type 1 errors. This significance level is usually kept at 0.05, which means there is a 5% chance of a type 1 error when the null hypothesis is true.